Multidisciplinary teams that must integrate software, mechanical and electrical components face an age-old problem: software development often stalls while the mechanical and electrical systems are still being designed. I ran into this issue personally on the University of Waterloo Mars Rover team, and I was keen to avoid it during the development of Centaur.

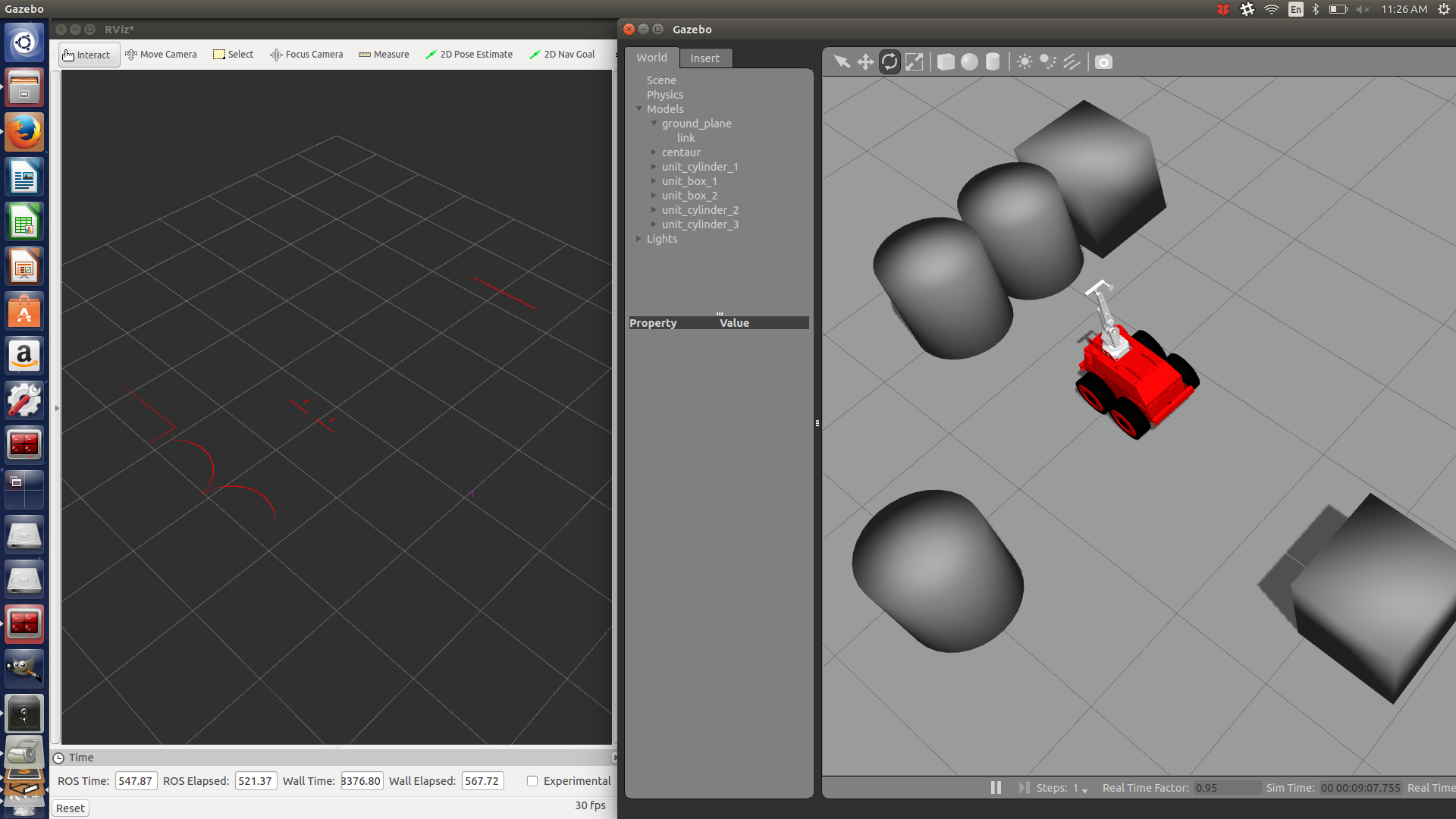

As soon as Project Centaur kicked off, Wesley and I discussed using the Gazebo simulation tool provided in the standard ROS installation to simulate the mechanical apparatus and the sensor systems that will be found on the real robot. A simulated robot would let us develop much of the software that will run on our robot in parallel, before the robot is actually built.

By importing the appropriate STL files and coding the physical properties of our robot in XML (the markup language of the robot description files), we were able to produce a simulated robot in a short time span. Initially, my task was to outfit the robot with sensors that would allow the operator to move the robot through the boiler rooms without crashing it, and to validate the sensors' positioning. We decided that two LIDARs, one at the front and one at the back of the robot, would allow accurate detection of the objects surrounding it. The back LIDAR specifically would allow the operator to reverse the robot safely.

Using the simulation and the open-source packages available for both the Hokuyo and the SICK-LMS, I was able to simulate not only the physical model of the sensors but their sensory output as well. Both LIDARs publish the appropriate scan topics, which makes interfacing with our software easy.
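To give a sense of what the scan data looks like to the software, here is a minimal sketch in plain Python. It is not our actual code: the `Scan` class is a hypothetical stand-in that mirrors a few fields of the ROS `sensor_msgs/LaserScan` message (`angle_min`, `angle_increment`, `range_min`, `range_max`, `ranges`), and `scan_to_points` is an illustrative helper name. It converts each valid beam into an (x, y) point in the sensor's own frame.

```python
import math
from dataclasses import dataclass
from typing import List, Tuple


@dataclass
class Scan:
    """Hypothetical stand-in mirroring a few fields of sensor_msgs/LaserScan."""
    angle_min: float        # angle of the first beam, in radians
    angle_increment: float  # angular step between consecutive beams, in radians
    range_min: float        # readings below this are invalid, in metres
    range_max: float        # readings above this are invalid, in metres
    ranges: List[float]     # one distance reading per beam, in metres


def scan_to_points(scan: Scan) -> List[Tuple[float, float]]:
    """Convert the valid beams of a scan to (x, y) points in the sensor frame."""
    points = []
    for i, r in enumerate(scan.ranges):
        # Skip inf/NaN readings and anything outside the sensor's valid range.
        if not math.isfinite(r) or not (scan.range_min <= r <= scan.range_max):
            continue
        angle = scan.angle_min + i * scan.angle_increment
        points.append((r * math.cos(angle), r * math.sin(angle)))
    return points
```

On the real system the same fields would arrive in messages on the scan topics, so a helper like this could be called from a subscriber callback whether the data comes from Gazebo or from the physical LIDARs.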

Getting the sensors to appear physically as they would on the real robot took some tweaking at first, which required knowledge of how tf trees (short for transform trees) work in ROS. Once the sensors were in their proper positions, I was able to start working on the drive safety system, which will eventually override operator commands if there is a dangerous object in the robot's way. Since the drive safety system relies heavily on the front and back LIDAR data, I have been able to use the simulation to test my code by reading simulated scan data.
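The idea behind the override can be sketched in a few lines of plain Python. This is only an illustration under stated assumptions, not the drive safety system itself: the function names, the sign convention (positive velocity means forward) and the 0.5 m `stop_distance` are all hypothetical placeholders. The front LIDAR gates forward motion and the back LIDAR gates reversing.

```python
import math
from typing import Sequence


def min_valid_range(ranges: Sequence[float], range_min: float, range_max: float) -> float:
    """Return the closest valid reading, or infinity if no beam is valid."""
    valid = [r for r in ranges if math.isfinite(r) and range_min <= r <= range_max]
    return min(valid) if valid else math.inf


def safe_linear_velocity(commanded: float,
                         front_clearance: float,
                         rear_clearance: float,
                         stop_distance: float = 0.5) -> float:
    """Override the operator's command when an obstacle is too close.

    commanded > 0 is assumed to mean forward, commanded < 0 reverse.
    stop_distance is an arbitrary placeholder threshold in metres.
    """
    if commanded > 0.0 and front_clearance < stop_distance:
        return 0.0  # obstacle ahead: block forward motion
    if commanded < 0.0 and rear_clearance < stop_distance:
        return 0.0  # obstacle behind: block reversing
    return commanded  # path is clear in the direction of travel
```

Note that a blocked direction does not prevent motion the other way, so the operator can still back away from an obstacle in front. Treating a scan with no valid beams as infinite clearance is a simplification; a real safety system might prefer to stop when the data is missing.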

For now, the simulation has served its purpose. It will often be reused when it is inconvenient to access the real robot, which is constantly in development, and it has hence become a handy tool to have. With the first iteration of the drive safety system nearing completion, I look forward to implementing the safety system on the real robot.